🩺 The Pulse: Pre-Clinical AI Mental Health Support and Conversational AI for Anxiety

Plus: Tracking clinic to-dos consistently and automatically

1. Triage – Your Fortnightly Rundown

Hi Pulse Readers, this week we’re diving into:

Whakarongorau Aotearoa's new AI mental health Welcome service,

what a 995-student RCT reveals about conversational AI compared with face-to-face group therapy,

and how you can automate your daily to-do list with Heidi Tasks.

2. Case Study – Your Fortnightly Practical

Video Source: Hendrix Health

Automate a To-do List Using Heidi Tasks

Case Presentation: The admin pile has been getting smaller for Dr Harry. Since he started using Heidi, the amount of work left over at the end of each day has noticeably dropped.

Today, he wrapped up a busy morning clinic. Across 15 patients, there were referrals to make, repeat bloods to order, results to chase, and a couple of patient callbacks he had promised to do himself. He had a mental list, a few sticky notes, and a vague sense that he had probably forgotten something.

Dr Harry wonders whether Heidi can help him track these action items consistently, without relying on memory or paper.

Approach: Capture follow-up actions automatically from each consultation with Heidi Tasks, then review and manage them from a single “Master” list across patients.

1. Open the Tasks panel during or after a session

Click the Tasks button at the bottom right of your Note to open the Tasks panel on the side. Heidi will have already identified to-do items from the transcript and listed them automatically. You can adjust how the panel behaves and turn auto-generation on or off in your settings.

2. Review what Heidi has captured, organised by category

Each task is auto-categorised into one of five categories:

Order: blood tests or imaging requests.

Coordinate: referrals to specialists or allied health.

Communicate: patient callbacks, results delivery, and reminders.

Document: letters, summaries, or reports that still need to be drafted.

Action: general tasks that don't fall neatly into the categories above.

You can edit a task title, change its category, or add a manual task by clicking "new task" at the bottom of the list.

3. View the evidence or generate a document directly from a task

Click the three-dot menu to the right of any task. From there, you can view the task evidence, which links you to the exact point in the transcript where Heidi picked up the task. You can also generate a document based on the task in one click. Heidi will automatically mark the task as Done once the document is ready, and this can be undone if needed. As the clinician, you remain responsible for reviewing the evidence and confirming clinical appropriateness before actioning any task or sending any document.

4. Manage outstanding to-dos through the “Master” Task List

Open your “Master” Task List by clicking the Tasks button on the far left of the screen. This list shows every outstanding to-do across all patients and sessions in one place, so you can work through your tasks at the end of the day without scrolling through individual notes. Tick items off as you complete them, or delete tasks that no longer apply.

Note: Heidi Tasks is currently in a phased beta rollout. Enable it by turning on Heidi Labs in Preferences.

Outcomes: With Heidi Tasks, every referral, test order, callback, and outstanding letter is already captured and categorised by the end of his morning clinic. Dr Harry spends the last 20 minutes of his day working through the Master Task List, rather than re-reading every note to remind himself what he meant to do. This lowers the risk of a missed test result or a forgotten referral.

This is particularly helpful for a busy GP managing dozens of small to-dos across a full day of patients.

Disclaimer: Hendrix Health is the official New Zealand partner for Heidi Health.

3. The Pulse - Your Fortnightly Update

Whakarongorau Aotearoa Launches AI Welcome Service for Mental Health and Family Harm Helplines

Whakarongorau Aotearoa (New Zealand Telehealth Services) will launch a Microsoft Azure AI-powered Welcome service in May 2026 to support people contacting its helplines while they wait to connect with a trained counsellor. The service will first be deployed on SMS for the national 1737 Need to talk? helpline and on webchat for the Whakarongorau-run Women's Refuge service.

Image Source: geneticliteracyproject.org

Whakarongorau handles more than 7,000 interactions daily across health, mental health, and social services, reaching over 735,000 people in Aotearoa annually. Mental health demand is rising sharply: at-risk contacts have grown 153 percent since 2019, and the average session now takes around 50 percent longer.

When someone makes contact, the Welcome service identifies itself as AI and lets the user choose to engage or wait in silence. It gathers contact details and the reason for contact, checks in on how the person is feeling, and offers non-clinical support such as breathing techniques. By the time a kaimahi (staff member) joins, they have context to focus on care from the first message.

Key Features:

Clear AI disclosure: Users are told upfront they are interacting with AI and can choose to engage or wait in silence.

Non-clinical scope: The service does not provide clinical guidance, diagnosis, or medical advice. Instead, it uses empathetic language to support the person until a counsellor is available.

Context handover: Counsellors join the conversation with contact details, reason for contact, and emotional state already captured, reducing time spent on basics.

Co-designed and tested: Developed with clinical oversight and frontline staff input, then tested with mental health service partners and people with lived experience.

Implications for the Health System and Clinicians: For New Zealand’s mental health and crisis support sector, this is a practical example of AI absorbing the pre-clinical work that traditionally consumes counsellor time at the start of each interaction. The key design choice is the narrow, non-clinical scope: AI is used only to keep people engaged while they wait.

One caution is that the service has not yet launched and no outcomes data is available. The real test will be whether it holds up safely across diverse users in distress, and whether wait-time experience and counsellor workload measurably improve once live.

Conversational AI in Mental Health: What a 995-Student RCT Reveals

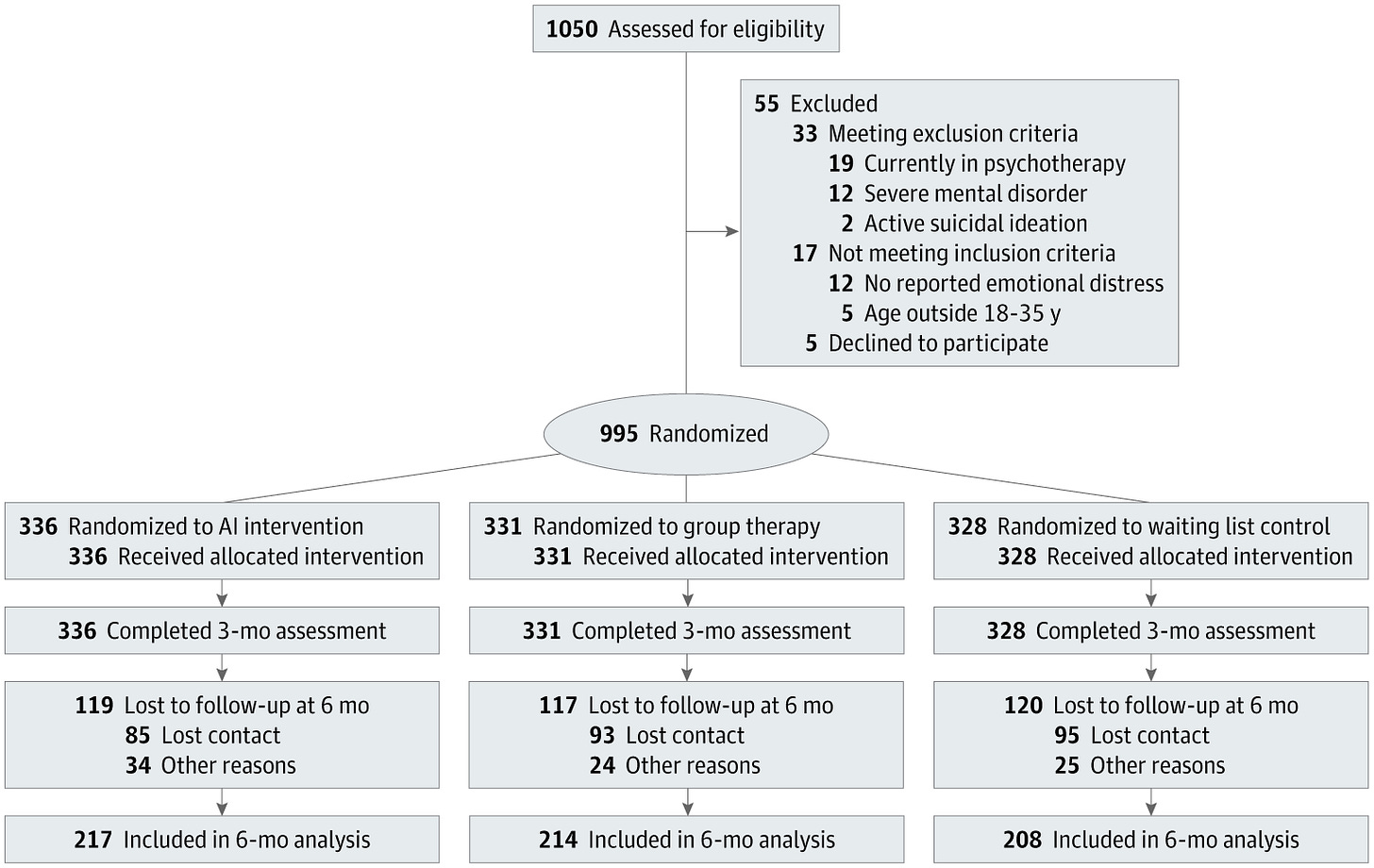

A randomised clinical trial published in JAMA Network Open compared a conversational AI mental health platform with face-to-face group therapy and a waiting list control among 995 university students in Israel reporting psychological distress.

Participants were randomised to a 12-week AI platform (Kai, KAI.AI Inc), 12 weekly 90-minute group therapy sessions led by licensed psychologists, or a waiting list. The AI drew on multiple therapy and mindfulness techniques, and users were encouraged to engage at least three times a week. People with active suicidality, current psychotherapy, or severe mental disorders were excluded.

Image Source: Journal of the American Medical Association (JAMA)

Key Findings:

Greater anxiety reduction with AI: The AI group showed significantly greater anxiety reduction than both group therapy and the waiting list. Group therapy did not differ significantly from the waiting list control group.

Clinical transition rates: Among participants meeting baseline clinical anxiety thresholds, 57.9% in the AI group moved into the non-clinical range, compared with 14.4% in group therapy and 9.8% in the control group.

Depression, well-being, and life satisfaction: All three outcomes favoured AI over the waiting list at 12 weeks and 3 months, with smaller and less consistent differences between AI and group therapy.

No effect on PTSD: No significant differences emerged between any groups at either time point, suggesting the platform did not improve trauma-related symptoms.

Therapy-seeking intention fell with AI: Intention to seek therapy declined from 29.8% to 23.5% in the AI group, remained stable in group therapy, and rose to 37.0% in the control group.

Implications for Healthcare Systems:

The results suggest conversational AI may have a role as an early-intervention tool for mild-to-moderate distress, particularly where access to face-to-face care is limited. Several limitations apply: outcomes were self-reported, participants were university students in one country, attrition reached 35% at 3 months, and PTSD symptoms were unaffected. The drop in therapy-seeking intention warrants caution, as it may reflect either genuine recovery or unmet need that is never escalated.

Read the full trial in JAMA here.

4. Vitals – Quick Bytes

Victoria Expands Virtual Hospital Pilot to 400 Patients

The Victorian Government is expanding its first Virtual Hospital pilot, jointly led by The Royal Melbourne Hospital and Austin Health. The programme uses video, phone, and remote monitoring to deliver hospital-level specialist care at home and in regional hospitals. It runs virtual wards and ward rounds for heart failure and post-cardiac surgery patients, as well as a virtual foetal medicine service with the Royal Women's Hospital, and a neurology programme is planned in partnership with Northeast Health Wangaratta. Since December 2025, more than 260 patients have been treated and over 1,000 hospital bed days saved, and the pilot is now scaling to 400 patients by the end of June. These figures come from early government announcements rather than independent evaluation, with a formal review scheduled for June 2026. (premier.vic.gov.au, thermh.org.au)

Frontier LLMs Still Stumble on Differential Diagnosis, Large Benchmarking Study Finds

A cross-sectional study in JAMA Network Open tested 21 off-the-shelf large language models, including GPT-5, Claude 4.5 Opus, Gemini 3.0, and Grok 4, on 29 standardised clinical vignettes from the MSD Manual, scoring 16,254 responses in total. Using a new multidimensional benchmark called PrIME-LLM, the authors found that reasoning-optimised models scored higher than non-reasoning ones, and that all models performed best on final diagnosis and management. However, failure rates for differential diagnosis exceeded 80% for every model, while final diagnosis failure rates stayed below 40%. The authors conclude that current LLMs jump to a single answer too quickly and cannot yet be trusted for unsupervised, patient-facing decisions. One caveat is that the study tested base models only. Tools that retrieve from trusted sources (such as Heidi Evidence) were not evaluated, and these may perform better in real-world use.

We’d love to hear your thoughts, so join the conversation by leaving a comment below.

Stay tuned for more insights in the next edition of The Pulse.

Have a great day & see you in two weeks!

Your Hendrix Health Team